This article contains AI-assisted content and has been reviewed and published by a human editor.

Why the new chatbot privacy study matters for marketing

The International Association of Privacy Professionals (IAPP) published an analysis of a major academic study on consumer chatbots in early March 2026, arguing that there is a structural privacy gap between what users expect and how providers actually reuse chat data, and proposing a design-forward fix called “Sealed Mode.” See the IAPP coverage for a summary of the study.

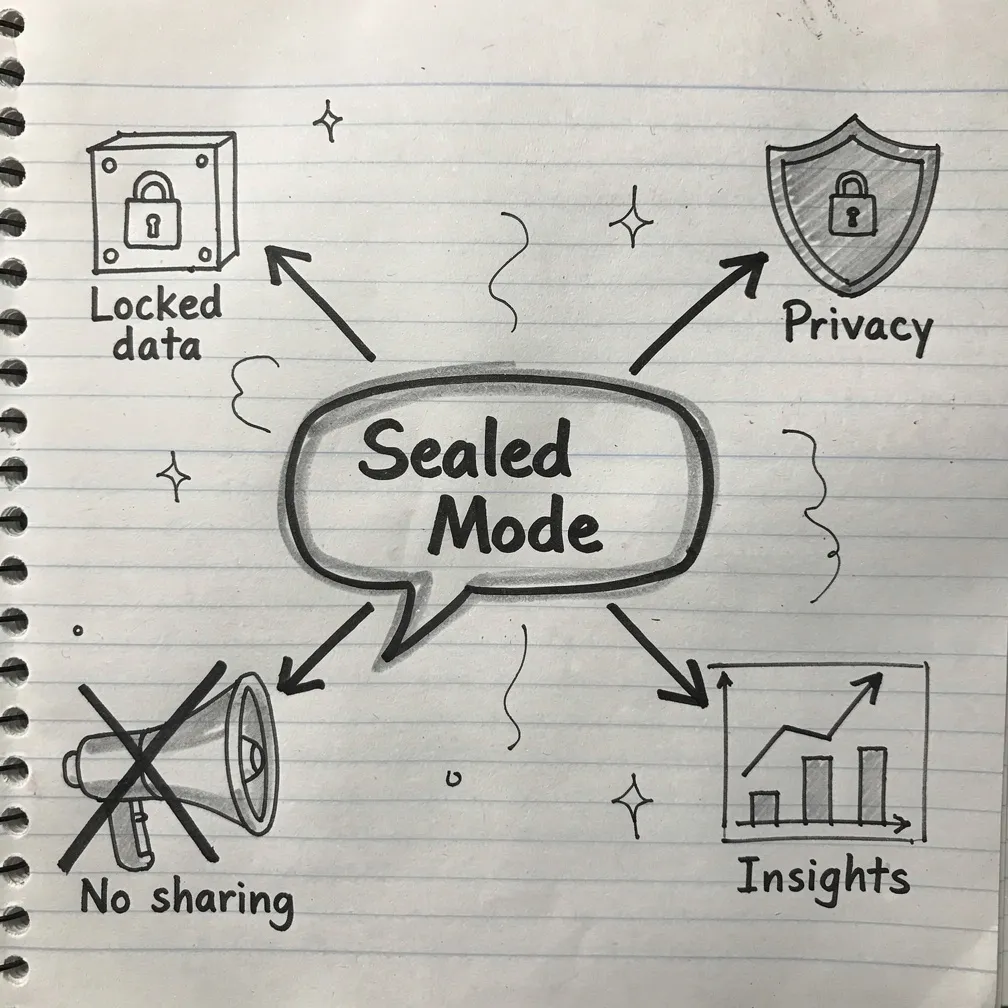

What is Sealed Mode?

Sealed Mode is a privacy-by-design suggestion that creates a clearly labeled lane — for example, “Health & Wellbeing — Sealed Mode” — where conversations are handled under stricter defaults: no training use, siloed personalization, no ad surfaces, short retention, limited human access and stronger governance. The goal is architectural protection rather than a user-facing warning alone.
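
To make the contrast between a sealed lane and a default lane concrete, here is a minimal sketch of how those stricter defaults might be written down as a policy object. Everything in it is an assumption for illustration: the `LanePolicy` class, its field names, the standard-lane values and the retention numbers are hypothetical and do not come from the study or from any provider's product.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class LanePolicy:
    """Handling defaults for one conversation lane (hypothetical fields)."""
    label: str
    allow_training: bool              # may chats be used to improve models?
    allow_ads: bool                   # may ads appear or be informed by chats?
    cross_lane_personalization: bool  # may memory leave this lane?
    retention_days: int               # placeholder; the source gives no number
    human_review: str                 # "routine" or "limited"


# Assumed values for a default lane, for comparison only.
STANDARD = LanePolicy("Standard", True, True, True, 365, "routine")

# Sealed lane: no training use, no ad surfaces, siloed personalization,
# short retention, limited human access.
SEALED = LanePolicy("Sealed Mode", False, False, False, 30, "limited")


def permitted_uses(policy: LanePolicy) -> set[str]:
    """Return the downstream uses a lane allows."""
    uses = {"respond"}  # answering the user is always permitted
    if policy.allow_training:
        uses.add("training")
    if policy.allow_ads:
        uses.add("ads")
    if policy.cross_lane_personalization:
        uses.add("cross_lane_personalization")
    return uses


print(sorted(permitted_uses(SEALED)))  # ['respond']
```

The point of the sketch is that the restriction lives in the lane's configuration, so downstream systems can check it mechanically instead of relying on a notice shown to the user.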

Advertising is already in the chat channel

Providers and advertisers are moving quickly. OpenAI began testing ads within ChatGPT for logged-in U.S. users on Free and Go tiers earlier in 2026, which makes the study’s recommendation to separate ad signals from sensitive conversations especially relevant for marketers who rely on conversational signals for targeting or measurement. OpenAI’s testing announcement highlights how ad personalization choices are being built into consumer chat products.

Three concrete implications for marketing teams

1) Data sourcing and consent: If Sealed Mode (or equivalent controls) becomes an industry norm or regulatory expectation, marketers who ingest conversation-derived signals will need to verify consent, retention terms, and whether sealed-lane content is excluded from improvement or targeting pipelines.

2) Targeting and creative: Ads or personalized messages that rely on chatbot memory or recent chats could be blocked from sealed lanes; brands should avoid assuming conversational inputs are always available for profiling or dynamic creative selection.

3) Measurement and auditability: Marketers should expect stronger event logging, recipient transparency and audit requirements for any pipeline that uses chat text for attribution or model training — the IAPP recommendations specifically call for separating operational uses from improvement uses and providing clear interfaces for recipient transparency.
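
The three implications above can be combined into a single eligibility check that a pipeline runs before it uses a chat-derived signal. The following is a rough sketch under stated assumptions: the `ChatSignal` record, its fields and the reason codes are hypothetical, and a real pipeline would take these values from whatever metadata the provider actually exposes.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone


@dataclass(frozen=True)
class ChatSignal:
    """A chat-derived signal plus the metadata needed to vet it (hypothetical)."""
    signal_id: str
    sealed: bool                    # did it originate in a sealed lane?
    consented_purposes: frozenset   # e.g. {"targeting", "measurement"}
    collected_at: datetime
    retention_days: int


def ineligibility_reasons(signal: ChatSignal, purpose: str, now: datetime) -> list[str]:
    """Return why a signal may not be used; an empty list means it is eligible.

    Returning reasons rather than a bare boolean gives an audit log
    something concrete to record for each rejected signal.
    """
    reasons = []
    if signal.sealed:
        reasons.append("sealed_lane")
    if purpose not in signal.consented_purposes:
        reasons.append("no_consent")
    if now > signal.collected_at + timedelta(days=signal.retention_days):
        reasons.append("retention_expired")
    return reasons


now = datetime(2026, 3, 15, tzinfo=timezone.utc)
signal = ChatSignal(
    signal_id="s1",
    sealed=True,
    consented_purposes=frozenset({"measurement"}),
    collected_at=datetime(2026, 3, 1, tzinfo=timezone.utc),
    retention_days=30,
)
print(ineligibility_reasons(signal, "targeting", now))  # ['sealed_lane', 'no_consent']
```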

Practical checklist for short-term action

- Audit any ingestion points that pull conversational or assistant memory data into ad targeting or analytics.

- Update privacy notices and advertiser contracts to describe whether chat-derived signals are used for training, targeting or measurement.

- Segment test plans: run experiments that exclude conversational signals to measure lift without potential sealed-lane data.

- Coordinate with legal and privacy teams to map obligations under GDPR/EU AI Act guidance if conversational advertising is part of your strategy.
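
For the experiment item in the checklist, one possible setup is a holdout arm that never sees conversational inputs. The sketch below assumes chat-derived features can be recognized by a naming prefix; the prefixes, arm names and function names are hypothetical, and a real feature store may need an explicit lineage tag instead.

```python
import hashlib

# Assumed naming convention for features derived from chats or assistant memory.
CHAT_DERIVED_PREFIXES = ("chat_", "assistant_memory_")


def strip_chat_features(features: dict) -> dict:
    """Drop every feature whose name marks it as chat-derived."""
    return {
        name: value
        for name, value in features.items()
        if not name.startswith(CHAT_DERIVED_PREFIXES)
    }


def assign_arm(user_id: str) -> str:
    """Assign a user to an arm deterministically by hashing the ID."""
    digest = hashlib.sha256(user_id.encode("utf-8")).digest()
    return "no_chat_signals" if digest[0] % 2 == 0 else "all_signals"


def features_for(user_id: str, features: dict) -> tuple[str, dict]:
    """Return the user's arm and the feature set that arm may use."""
    arm = assign_arm(user_id)
    if arm == "no_chat_signals":
        return arm, strip_chat_features(features)
    return arm, dict(features)


example = {"chat_recent_topic": "sleep", "assistant_memory_diet": "vegan", "region": "US"}
print(strip_chat_features(example))  # {'region': 'US'}
```

Comparing lift between the two arms gives an estimate of how much performance depends on conversational signals, which is the number leadership will want if those signals become unavailable.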

Who in the privacy world is paying attention?

Peter Swire (@peterswire on X), a respected privacy scholar and former government privacy official, publicly flagged the study as essential reading for builders, regulators and companies working with consumer chat, urging careful attention to the paper’s recommendations. The author of the IAPP piece also acknowledges Swire’s input on earlier drafts.

What marketers should brief leadership on now

Brief your CMO and privacy lead that the conversation about chatbot privacy has moved from warnings to architecture: regulators and standards bodies are already discussing verifiable technical controls, and the study’s Sealed Mode is a concrete design that could influence product defaults and advertising rules. If conversational channels are part of your data stack, prepare to explain how you would stop using sealed-lane signals for targeting or measurement on short notice.

Conclusion

Marketers should treat the study covered by the IAPP and its Sealed Mode proposal as a near-term signal: conversational AI is becoming an advertising surface, but not all conversations are equal. Audit inputs, update policies, and design experiments that assume sensitive chats will be off-limits for targeting. Doing so protects customer trust and reduces regulatory and brand risk.